In recent years, Berkeley has been touted in marketing campaigns as the Boba Capital of the Bay Area. It doesn’t sound that farfetched given that one neighborhood immediately south of the Cal campus has a reported 18 shops serving up this sippable treat within a 3-block radius. And I’ve seen social media coverage of shop openings with waits over an hour just in the past few months (1, 2), so it’s hard to argue that the city’s enthusiasm for these drinks has waned over time.

I’ll leave discussions of the broad cultural footprint of boba in the United States to others, but I do admit that boba has long held a special place in my heart. So, seeing as it’s Asian-American Pacific Islander Month and boba has journeyed across the Pacific alongside those who brought it, I thought it’d be fun to gauge just how ubiquitous this beverage has become in this unique college town.

Broad Objectives

Quantify the number of establishments that sell freshly made boba drinks in Berkeley, California, and visualize their layout across the city

Assess the ability of different tools (e.g. Google Places API, Google Gemini, OpenAI ChatGPT, and Microsoft Copilot) to provide a list of those vendors and their locations in a small number of requests

Data & Methods

The Google Places API was queried for “Boba or bubble tea in Berkeley, California”. To supplement the list, I searched Yelp and DoorDash for “boba” or “bubble tea” to identify any places the Places API missed. Each additional outlet was then queried directly by name and city in the Places API, and the result was added to the final list. To inform spatial mapping and analysis, I also searched neighboring cities (Albany, El Cerrito, Emeryville, Oakland) and added outlets near the Berkeley city border.
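The post does not say which Places API endpoint or client was used. As a minimal sketch, assuming the Text Search (New) endpoint and a placeholder API key, the query and the flattening of its response could look like this in Python (the original analysis was done in R). The function names and the chosen field mask are my own assumptions.

```python
import json
import urllib.request

# Text Search (New) endpoint; which endpoint the analysis used is an assumption.
SEARCH_URL = "https://places.googleapis.com/v1/places:searchText"
FIELDS = "places.displayName,places.location,places.types,places.businessStatus"


def build_request(text_query: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a Text Search request for the given query."""
    body = json.dumps({"textQuery": text_query}).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "X-Goog-Api-Key": api_key,
        "X-Goog-FieldMask": FIELDS,
    }
    return urllib.request.Request(SEARCH_URL, data=body, headers=headers, method="POST")


def parse_places(payload: dict) -> list[dict]:
    """Flatten the JSON response into one row per place."""
    rows = []
    for place in payload.get("places", []):
        location = place.get("location", {})
        rows.append({
            "name": place.get("displayName", {}).get("text", ""),
            "lat": location.get("latitude"),
            "lon": location.get("longitude"),
            "types": place.get("types", []),
            "operational": place.get("businessStatus") == "OPERATIONAL",
        })
    return rows


def search(text_query: str, api_key: str) -> list[dict]:
    """Send the query and return parsed rows (needs a valid key and network access)."""
    with urllib.request.urlopen(build_request(text_query, api_key)) as response:
        return parse_places(json.load(response))
```

A call such as `search("Boba or bubble tea in Berkeley, California", api_key)` would return one page of results. Text Search responses are paginated, so a complete pull would also need to request and follow the page token; that step is left out here.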

I was also curious to see how LLM-based AI chatbots would perform in identifying these boba spots. I first asked the available free versions of Google Gemini, ChatGPT, and Microsoft Copilot to generate a comprehensive list of Berkeley boba establishments. A similar second prompt followed, with modified instructions to include any establishment serving boba, not just ones specializing in it. Each chatbot (Gemini, ChatGPT, Copilot) has simpler and more complex model variations available for use, so I tested both. Also, because of possible limits on the tokens used to generate responses, I tried chunked queries asking for 5 establishments each round to see if that improved their ability to identify the desired businesses.

Full List Request

Generate list of establishments currently in operation in Berkeley, California that sell boba/bubble tea. Just list the names and their latitude & longitude in csv format.

Generate list of establishments currently in operation in Berkeley, California that sell boba/bubble tea, including businesses that don’t specialize in it but do serve it. Do not duplicate any you have named in previous responses to me. If there are no more new outlets, you may end your listing at the last unnamed outlet. Just list the names and their latitude & longitude in csv format.

5-at-a-Time Request

Generate list of 5 establishments currently in operation in Berkeley, California that sell boba/bubble tea. Just list the names and their latitude & longitude in csv format.

Generate list of 5 establishments currently in operation in Berkeley, California that sell boba/bubble tea. Just list the names and their latitude & longitude in csv format. Do not duplicate any you have named in previous responses to me. If there are no more new outlets, you may end your listing at the last unnamed outlet.

Generate list of 5 establishments currently in operation in Berkeley, California that sell boba/bubble tea, including businesses that don’t specialize in it but do serve it. Just list the names and their latitude & longitude in csv format. Do not duplicate any you have named in previous responses to me. If there are no more new outlets, you may end your listing at the last unnamed outlet.
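The 5-at-a-time strategy amounts to a loop that keeps asking for new names until a round adds nothing. The sketch below is a hypothetical Python harness, not how the chatbots were actually queried for this post. `ask` is a stand-in for whatever function sends a prompt and returns the reply text, and matching names case-insensitively is my own assumption.

```python
import csv
import io
from typing import Callable

FIRST_PROMPT = (
    "Generate list of 5 establishments currently in operation in Berkeley, "
    "California that sell boba/bubble tea. Just list the names and their "
    "latitude & longitude in csv format."
)
FOLLOW_UP = FIRST_PROMPT + (
    " Do not duplicate any you have named in previous responses to me. "
    "If there are no more new outlets, you may end your listing at the last unnamed outlet."
)


def parse_rows(reply: str) -> list[tuple[str, str, str]]:
    """Read name,lat,lon rows from a CSV reply, skipping headers and malformed lines."""
    rows = []
    for record in csv.reader(io.StringIO(reply)):
        if len(record) != 3:
            continue  # rows without both coordinates are dropped in this sketch
        name, lat, lon = (field.strip() for field in record)
        try:
            float(lat)
            float(lon)
        except ValueError:
            continue  # header row or non-numeric coordinates
        rows.append((name, lat, lon))
    return rows


def collect(ask: Callable[[str], str], max_rounds: int = 10) -> list[tuple[str, str, str]]:
    """Ask in chunks until a round yields no new names or max_rounds is reached."""
    seen: set[str] = set()
    found = []
    for round_number in range(max_rounds):
        reply = ask(FIRST_PROMPT if round_number == 0 else FOLLOW_UP)
        added = 0
        for row in parse_rows(reply):
            key = row[0].casefold()  # case-insensitive duplicate check
            if key not in seen:
                seen.add(key)
                found.append(row)
                added += 1
        if added == 0:
            break
    return found
```

Deduplicating on the caller's side matters because, as described later in the post, the chatbots did not always honor the "do not duplicate" instruction.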

Out of irrational personal bias, I excluded locations that served crystal agar or popping boba, but not the more typical tapioca balls. My apologies to Alley Kitchens and.. Starbucks?? Though I will obviously not penalize any methodology for detecting those.

Mapping and spatial analyses were done in R using the sf, terra, ggspatial and osrm packages. Mapping layers were sourced from the California Open Data Portal and Open Street Map (OpenStreetMap contributors, 2024).

Results

Identification Proficiency

The API reported 24 operational locations within Berkeley city limits, but 1 was no longer in service, and 1 specialized in tea but did not offer boba, leaving 22 valid outlets. The additional manual searches (e.g. Yelp, DoorDash, Uber Eats) yielded 19 more outlets, bringing the count of relevant boba service points to 41.

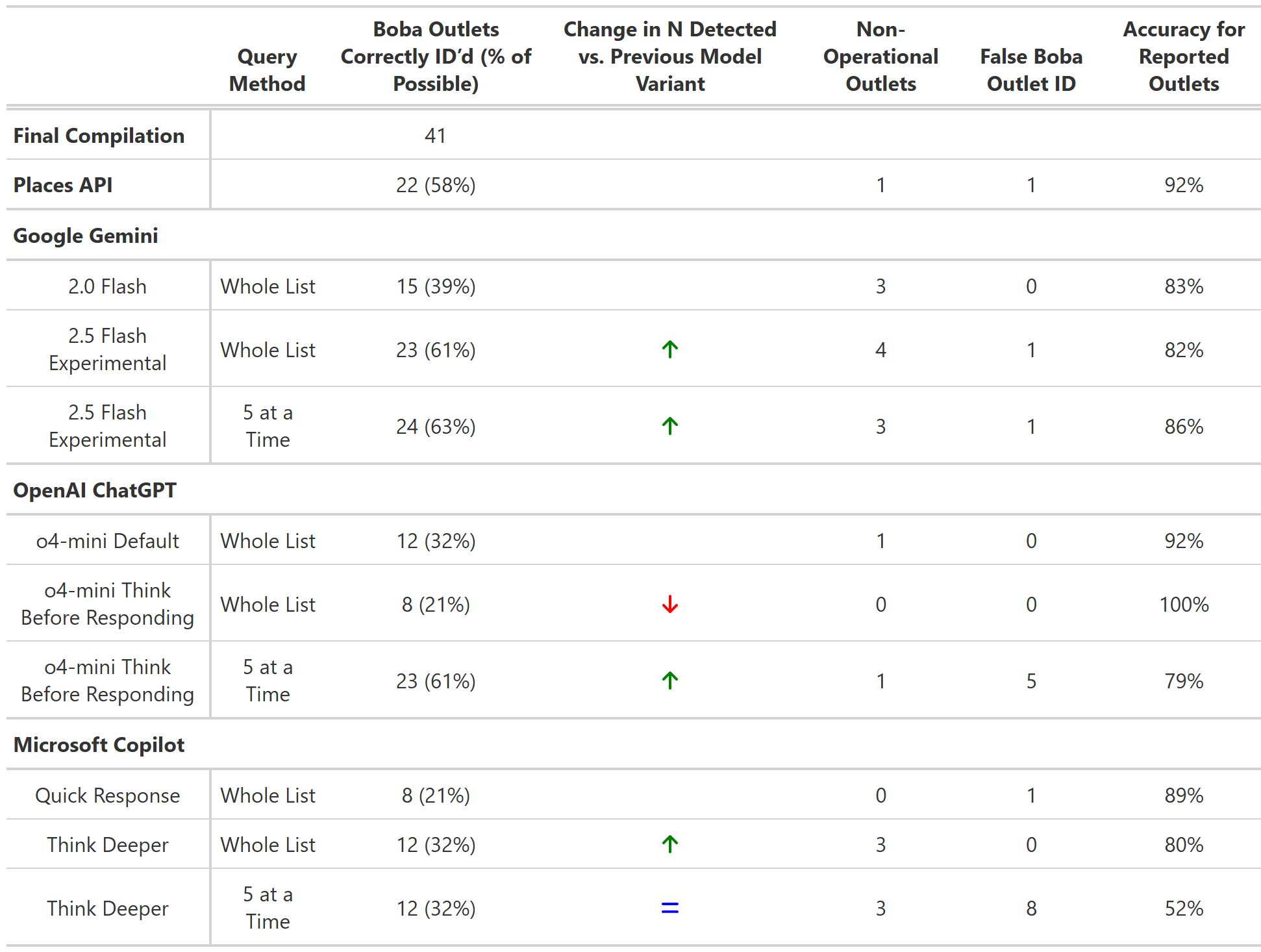

Table 1. Boba Outlet Identification Metrics by Methodology

A few observations regarding the LLM place retrieval:

Three iterations of the LLM requests identified more outlets (23-24) than the Places API (22), but all of them also made more errors (4-6, compared with 2 from the API).

No consistent pattern across LLM requests was noted when comparing moves from simpler models to those utilizing longer processing time, and from full-list requests to 5-at-a-time requests.

Google Gemini: identified more establishments with the more intensive generation model, and identified a similar number with slightly improved precision when dealing with the request in chunks

ChatGPT: identified fewer establishments with the deeper reasoning model, but was much more successful with chunked requests

Copilot: identified a few more establishments with the deeper model, but the chunked requests produced more misidentifications without added true identifications

Coordinates were either not provided by the models or, when given, tended to be inconsistent and often inaccurate by multiple blocks
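Because Table 1 is only available as an image, the exact metrics it reports are not restated here. As a hedged illustration, two natural measures are sensitivity (the share of the 41 verified outlets that a method found) and precision (the share of a method's reported outlets that were correct). The Python sketch below applies them to the Places API figures given in the text (24 reported, 2 of them errors); the class and field names are my own.

```python
from dataclasses import dataclass

TOTAL_VERIFIED = 41  # final count of qualifying outlets from the combined search


@dataclass
class MethodResult:
    name: str
    correct: int  # reported outlets that really sell boba in Berkeley
    errors: int   # reported outlets that were closed or did not qualify

    @property
    def sensitivity(self) -> float:
        """Share of all verified outlets that this method found."""
        return self.correct / TOTAL_VERIFIED

    @property
    def precision(self) -> float:
        """Share of this method's reported outlets that were correct."""
        return self.correct / (self.correct + self.errors)


places_api = MethodResult("Places API", correct=22, errors=2)
print(
    f"{places_api.name}: sensitivity {places_api.sensitivity:.0%}, "
    f"precision {places_api.precision:.0%}"
)
# Places API: sensitivity 54%, precision 92%
```

The same two lines of arithmetic apply to each chatbot run once its correct and erroneous identifications are counted.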

Places API Place Types¹

| Category | N |
|---|---|
| Food | 33 |
| Restaurant | 19 |
| Store | 15 |
| Cafe | 12 |
| Bakery | 6 |

¹ Multiple types listed for each establishment

Table 2. Boba Outlet Place Designations According to Google Places API

Most identified boba establishments were classified as “Food” establishments. Only 8 were lacking that designation, though searches indicate that at least 5 of those have sold food, at least until recently. A little less than half were tagged as restaurants, with cafe and bakery being less common categorizations.

Comparing the outlets provided directly by the API with those added manually, restaurants were much more common in the manually added set (68%) than in the API-sourced set (27%).

Locations

Google Map of Boba Outlets in Berkeley

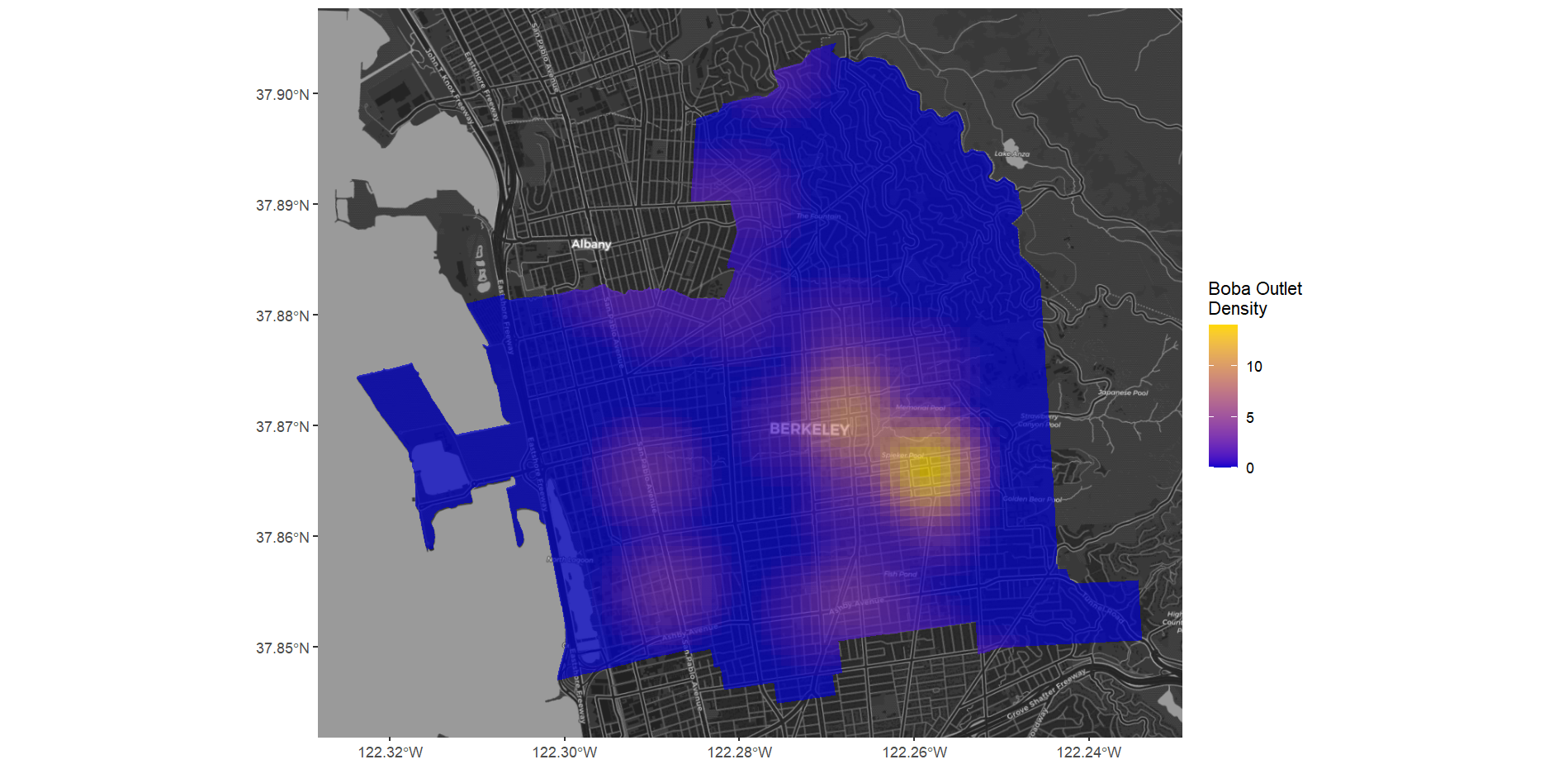

Boba outlets in the city were heavily concentrated in the commercial areas just south and just west of campus. Other commercial corridors, like San Pablo Avenue to the west and Solano Avenue to the north, saw more modest prevalence.

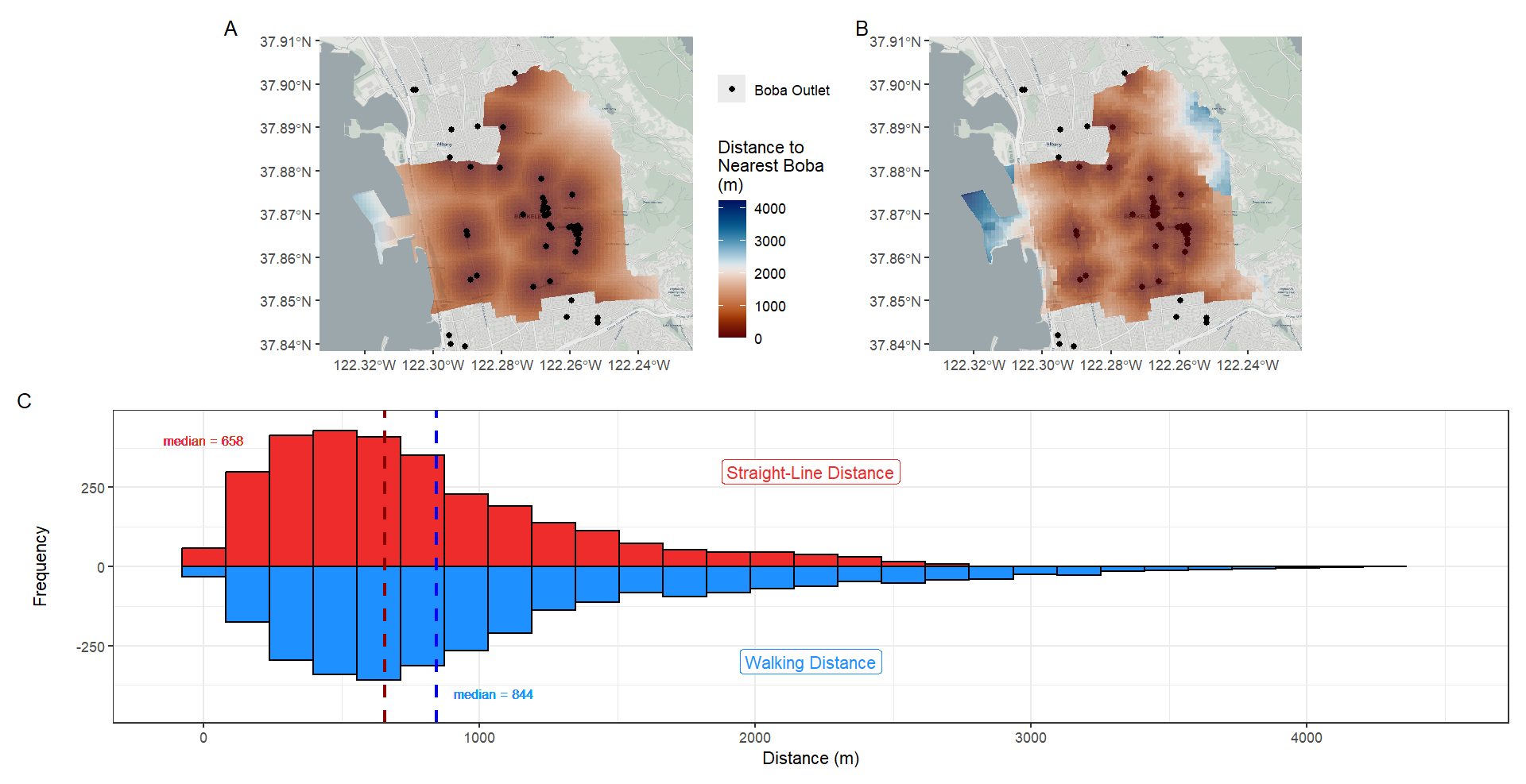

When accounting for retail locations just outside of the city, only a few areas of Berkeley were farther than 1 km from a boba outlet. With the city divided into a grid of 100 m x 100 m squares, 73% of squares fell within this distance. Fewer than 5% were farther than 2 km, with most of those lying deep in the residential areas of Northeast Berkeley or at the Berkeley Marina. When walking distance along the road network was used instead, more than half (59%) were still within 1 km of an outlet, while the share more than a 2 km walk away rose to 14%.
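The straight-line part of this calculation can be sketched as follows. The original work was done in R with sf, terra, and osrm; this Python version is only an illustration. It uses great-circle distance rather than projected coordinates, and it takes a hypothetical bounding box and made-up outlet locations rather than the real city boundary and data. Walking distances would additionally need a routing engine such as OSRM.

```python
import math

EARTH_RADIUS_M = 6_371_000


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def grid_centres(south, west, north, east, cell_m=100):
    """Centres of roughly cell_m x cell_m squares covering a bounding box."""
    dlat = cell_m / 111_320  # approximate metres per degree of latitude
    dlon = cell_m / (111_320 * math.cos(math.radians((south + north) / 2)))
    n_rows = int((north - south) / dlat)
    n_cols = int((east - west) / dlon)
    return [
        (south + (i + 0.5) * dlat, west + (j + 0.5) * dlon)
        for i in range(n_rows)
        for j in range(n_cols)
    ]


def share_within(cells, outlets, threshold_m):
    """Fraction of cells whose nearest outlet lies within threshold_m."""
    near = sum(
        1
        for lat, lon in cells
        if min(haversine_m(lat, lon, o_lat, o_lon) for o_lat, o_lon in outlets) <= threshold_m
    )
    return near / len(cells)


# Hypothetical inputs: a rough box around Berkeley and two made-up outlet locations.
cells = grid_centres(37.85, -122.30, 37.89, -122.25)
outlets = [(37.868, -122.259), (37.870, -122.268)]
print(f"{share_within(cells, outlets, 1000):.0%} of cells within 1 km")
print(f"{1 - share_within(cells, outlets, 2000):.0%} of cells farther than 2 km")
```

A real version would clip the grid to the city polygon before computing the shares, since a bounding box includes cells that lie outside the city.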

Discussion

Spatial Analysis

Given the state’s latest population estimate for Berkeley (128,348 for 2025), the 41 boba destinations work out to 3.2 per 10,000 residents, exceeding the rate of any city reported in a 2023 analysis published by the SF Chronicle. At that time, Berkeley ranked 10th among U.S. cities with at least that many residents. Some of this increase in the count of outlets represents continued growth in the space since 2023, evidenced by the 2025 openings of both large global chain franchises like Hey Tea and up-and-coming local purveyors like Binge Coffee House. Other top boba cities seem to have also seen increases in the number of active outlets (2023 SF Chronicle report -> 2025 API request: Berkeley - 17 -> 24; Garden Grove - 37 -> 42; Sugar Land - 23 -> 32; Pasadena - 28 -> 34). But it’s likely that my methodology identified a number of locations never recorded in the 2023 analysis, and that the same process could reveal much larger counts in those other cities as well.
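The per-capita figure follows directly from the outlet count and the population estimate in the paragraph above. A quick check in Python:

```python
outlets = 41
population = 128_348  # 2025 state estimate for Berkeley cited in the text

per_10k = outlets / population * 10_000
print(f"{per_10k:.1f} boba outlets per 10,000 residents")  # 3.2 boba outlets per 10,000 residents
```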

The high concentration of outlets around the Berkeley campus is unsurprising given the heavy presence there of people of Asian descent and young people, who study and live in and near that area and make up a large portion of the boba market. But as the distance maps show, most Berkeley residents should be just a short walk from the nearest boba source, should a hankering arise. The concentrated clustering of boba service points is not at all limited to Berkeley, but is rather an oft-repeated phenomenon. This is despite the fact that some level of cannibalization of business must exist between them. One wonders how strong the advantages of specific siting characteristics must be to outweigh the impacts of such close competition.

There are also some curious things to note in the stated composition of these outlets. A number of these outlets were not tagged as food establishments, but further investigation revealed that even some of those serve food ranging from bubble waffles to Basque cheesecake to sushi tacos. I’d also note that a number of the locations tagged as restaurants don’t have dining areas. This does lead to questions about how sensitive and specific these Google-generated category determinations are. Also, while the definition of a chain often varies, manual searches indicated that 15 of the 41 outlets (37%) appeared to be single-location spots and thus not part of a chain.

Boba Outlet Identification

While the direct Places API request did perform very well in identifying boba vendors and was predictably fairly up to date on closures, even that method reported a couple of establishments that fell outside the bounds of the request. Given that one of those errors was an establishment specializing in tea, I’d speculate that the manner in which the API parsed the “bubble tea” query text was at least partially to blame. Also, identifying restaurants that serve boba as an accessory to their main offerings can be difficult given the lack of boba mentions in reviews or other kinds of data aggregations that might inform the API and other queryable sources. This seems clear given that I had to manually add a number of boba-serving restaurants that were absent from the API-provided list.

For those looking to the publicly available LLM-powered chatbots to produce a comprehensive list like this, it seems they might provide a quick starting point. But the 63% identification rate achieved by the most sensitive iteration implies that more work would still need to be done to create a satisfactory compilation.


According to the responses, and the logical reasoning when supplied, initial LLM strategies focused on standard web searches using keywords like “boba tea shops open in Berkeley CA” (Gemini) & “bubble tea shops in Berkeley CA” (ChatGPT). Gemini did not share which resources it relied on, but ChatGPT mentioned sites like Yelp, food delivery services, Reddit, newspapers, local commerce business directories, and relevant lists already compiled online. From there, they seemed to narrow the candidates to those still in operation, typically relying on searches for individual businesses. I also leaned on Yelp and food ordering websites to compile the final list, so I don’t think the resources themselves can be faulted too much.

The basic iterations of the LLMs initially performed more poorly than the more direct Places API request. Part of this might be related to a token limit on generated responses that curtails the amount of information a model can assess and deliver in a message. A partial remedy for this seemed to be to use multiple queries to ask for the information in pieces, here exemplified by the 5-at-a-time strategy. Reading from the logical reasoning explanations, this also gave the LLMs a little leeway to return the highest quality answers. However, even this technique may have its limits: in its 8th attempt to respond to the query, Gemini’s documented reasoning stated that it could access the first 6 of its responses but not the 7th, and it added repeats to the list. Ultimately, dividing the request into smaller pieces did register more identifications, but had wide-ranging impacts on the number of errors made.

Admittedly, I would not say this task was an easy one for chatbots to accomplish, given that web searches seem to be their primary tool for accessing data. Boba vending is a rapidly shifting space, so figuring out which outlets are currently operational poses some difficulty. In point of fact, 2 qualifying outlets have closed within the short time I’ve been working on this analysis. Locations can also change names due to franchising swaps, and the closure of one outlet is often followed by the opening of another at the same address, which obscures the operating status at that address.

Some observed identification errors seemed explainable. For example, one incorrectly identified location did not serve boba, but sold canned non-bubble tea branded by a popular boba chain. Copilot also falsely identified an outlet called “Berkeley Boba Cafe”, but that may have been the result of another, now-defunct vendor calling itself “Berkeley’s Boba Cafe” on its website.

But there were instances where chains never present in Berkeley were inexplicably linked to the city, and nothing in the reasoning explained where that confusion came from. While the LLMs’ corroboration efforts would often erase that error before the response was ultimately submitted, it calls into question how each LLM parses the search results it finds, such as whether it confuses items listed together in resources.

Ultimately, however, the underwhelming identification rate might be a result of the use of computational power to try to deliver coordinates, which posed great difficulties as described below. It might be good to re-evaluate their abilities without this requirement to produce a better comparison of the LLMs’ abilities in this domain.

LLM Geolocation Issues

Additionally, from the Copilot responses and the reasoning provided by Gemini and ChatGPT, it is clear that requesting geographic coordinates was particularly taxing given the limits of what these models had access to. Gemini’s 2.5 model first provided coordinates for the first 5 outlets in its list. However, its reasoning later in the conversation stated that it did not have the access needed to deliver coordinates, and it eventually admitted in the documented reasoning that the coordinates it originally provided were hallucinations. No warnings about this were given in the actual responses, however. ChatGPT tried to report coordinates, and its 5-at-a-time responses noted where it got the locations and that some were approximations. A check of the coordinates reveals that some were indeed quite inaccurate, as were the ones provided in the basic queries. Copilot stated outright in its responses that any coordinates it gave were only for demonstrating the CSV formatting and that an API service should be consulted for real location data.
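A coordinate check of this kind can be sketched by measuring how far each chatbot-reported point falls from a reference location for the same outlet, such as the one returned by the Places API. This is an illustration in Python, not the procedure used for the post. The outlet names, the coordinates, and the 150 m tolerance are all made up.

```python
import math

EARTH_RADIUS_M = 6_371_000


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def flag_offsets(reported, reference, tolerance_m=150):
    """Map outlet name to offset in metres for points off by more than tolerance_m."""
    flagged = {}
    for name, (lat, lon) in reported.items():
        if name not in reference:
            continue  # nothing to compare against
        ref_lat, ref_lon = reference[name]
        offset = haversine_m(lat, lon, ref_lat, ref_lon)
        if offset > tolerance_m:
            flagged[name] = round(offset)
    return flagged


# Hypothetical data: reference points versus chatbot-reported points.
reference = {"Shop A": (37.8680, -122.2590), "Shop B": (37.8700, -122.2680)}
reported = {"Shop A": (37.8681, -122.2591), "Shop B": (37.8719, -122.2585)}
print(flag_offsets(reported, reference))
```

In this made-up example only "Shop B" is flagged, mimicking the case where a chatbot substitutes a nearby landmark's coordinates for the outlet's own.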

For the models with reasoning provided (ChatGPT and Gemini), the typical stated tactic was to get an address and work from that to geographic coordinates. In doing so, the reasoning included some confusing declarations, such as that an address was missing from a retailer’s website when it was clearly present, implying that information extraction is sometimes non-trivial for LLMs at this level. As for coordinates, the models occasionally resorted to less orthodox sources, such as Mapquest URLs and realty websites. They did seem to have access to geocoding websites (e.g. LatLong.net, Geocords.com) but only seemed able to use them to get coordinates for the Berkeley City Center, and not for specific addresses. Other approximations, such as assigning UC Berkeley coordinates to an off-campus outlet, were performed with little to no notification to the user. Ultimately, the lack of location data could make the bots hesitant to report establishments, even when they had high confidence that a place was a boba service outlet. ChatGPT, in its first attempt to generate an entire list at once, found 22 boba outlets by the end of its reasoning process but only reported 8, as the predilection to provide full entries overwhelmed the directive to report all outlets.

Closing Remarks

I observed that Berkeley boba service points substantially exceed what has been previously reported, and that the city realistically could have the most per capita of all U.S. urban regions. But compiling that total required a bit of elbow grease, as both the Google Places API and free LLM-based chatbots could not deliver a comprehensive ready-made compilation when prompted to list them all with geolocation data. Furthermore, those specific tools needed a little oversight to ensure that the answers they do provide are accurate to prompt requirements. Perhaps the more advanced iterations of the chatbots or elaborate prompting could produce better execution, but the probabilistic nature of LLM responses may just mean that occasional inaccurate generations are difficult to avoid.